AWS Launches New GPU-Equipped EC2 P4 Instances for Machine Learning & HPC

The Amazon EC2 team has been providing our customers with GPU-equipped instances for nearly a decade. The first-generation Cluster GPU instances were launched in late 2010, followed by the G2 (2013), P2 (2016), P3 (2017), G3 (2017), P3dn (2018), and G4 (2019) instances. Each successive generation incorporates increasingly capable GPUs, along with enough CPU power, memory, and network bandwidth to allow the GPUs to be used to their utmost. AWS has now launched the newest generation: EC2 P4 instances (p4d.24xlarge).

New EC2 P4 Instances

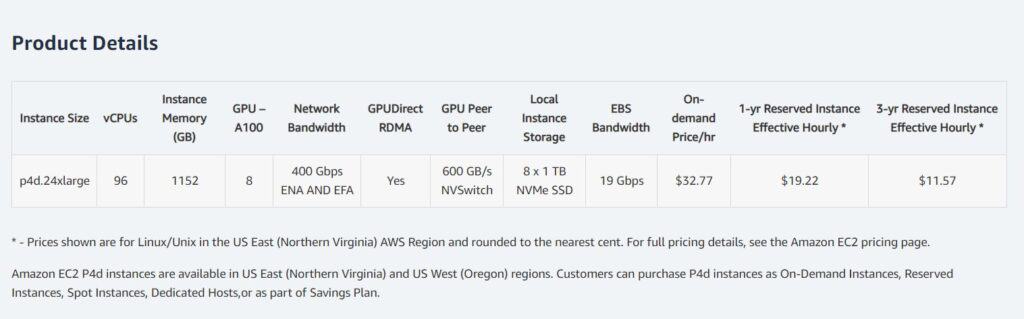

Today I would like to tell you about the new GPU-equipped P4 instances. These instances are powered by the latest Intel® Cascade Lake processors and feature eight of the latest NVIDIA A100 Tensor Core GPUs, each connected to all of the others by NVLink and with support for NVIDIA GPUDirect. With 2.5 PetaFLOPS of floating-point performance and 320 GB of high-bandwidth GPU memory, the instances can deliver up to 2.5x the deep learning performance, and up to 60% lower cost to train when compared to P3 instances.

P4 instances include 1.1 TB of system memory and 8 TB of NVMe-based SSD storage that can deliver up to 16 gigabytes of read throughput per second.

Network-wise, you have access to four 100 Gbps network connections to a dedicated, petabit-scale, non-blocking network fabric (accessible via EFA) that was designed specifically for the P4 instances, along with 19 Gbps of EBS bandwidth that can support up to 80K IOPS.
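As a quick sanity check on the figures above, the per-GPU and aggregate numbers can be derived with simple arithmetic. The sketch below is illustrative only: the names are made up for this example, and the per-GPU values are just the quoted totals divided evenly across the eight GPUs, not separately published specifications.

```python
# Figures quoted in the text for one p4d.24xlarge instance.
GPUS_PER_INSTANCE = 8
TOTAL_GPU_MEMORY_GB = 320
TOTAL_PETAFLOPS = 2.5
NETWORK_LINKS = 4
GBPS_PER_LINK = 100


def derived_specs():
    """Return per-GPU and aggregate figures derived from the quoted totals."""
    total_gbps = NETWORK_LINKS * GBPS_PER_LINK
    return {
        # Assumes memory and FLOPS are split evenly across the GPUs.
        "gpu_memory_gb_per_gpu": TOTAL_GPU_MEMORY_GB / GPUS_PER_INSTANCE,
        "teraflops_per_gpu": TOTAL_PETAFLOPS * 1000 / GPUS_PER_INSTANCE,
        "network_gbps_total": total_gbps,
        # 8 bits per byte: convert aggregate Gbps to gigabytes per second.
        "network_gigabytes_per_s": total_gbps / 8,
    }


if __name__ == "__main__":
    for name, value in derived_specs().items():
        print(f"{name}: {value}")
```

The four 100 Gbps links add up to the 400 Gbps figure used elsewhere in this post.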

What can EC2 P4 Instances do?

Researchers, data scientists, and developers can leverage P4d instances to train ML models for use cases such as natural language processing, object detection and classification, and recommendation engines, as well as run HPC applications such as pharmaceutical discovery, seismic analysis, and financial modeling.

Unlike with on-premises systems, customers can access virtually unlimited compute and storage capacity, scale their infrastructure based on business needs, and spin up a multi-node ML training job or a tightly coupled distributed HPC application in minutes, without any setup or maintenance costs.

EC2 UltraClusters

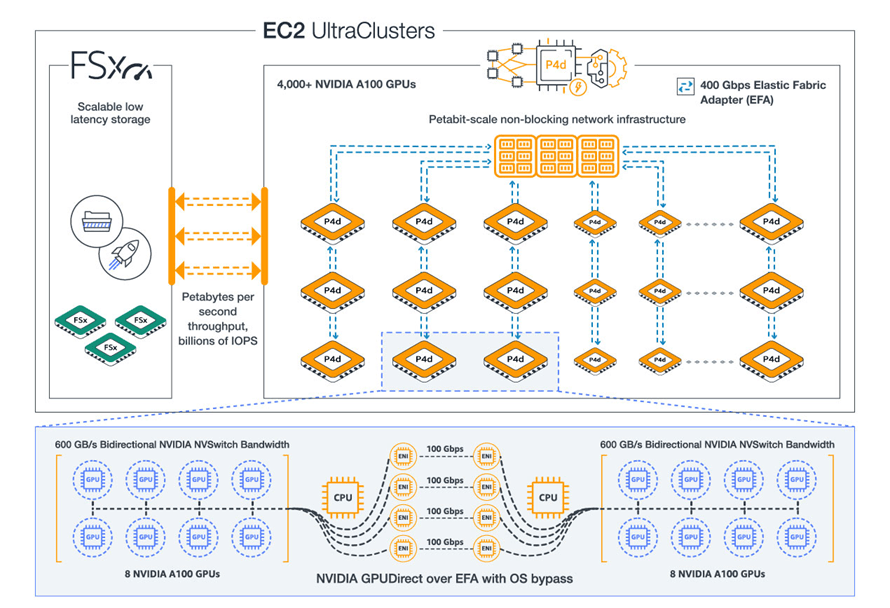

The NVIDIA A100 GPUs, support for NVIDIA GPUDirect, 400 Gbps networking, the petabit-scale network fabric, and access to AWS services such as S3, Amazon FSx for Lustre, and AWS ParallelCluster give you all that you need to create on-demand EC2 UltraClusters with 4,000 or more GPUs.

These clusters can take on your toughest supercomputer-scale machine learning and HPC workloads: natural language processing, object detection & classification, scene understanding, seismic analysis, weather forecasting, financial modeling, and so forth.

High-Scale ML Training and HPC with EC2 P4d UltraClusters

EC2 UltraClusters of P4d instances combine high-performance compute, networking, and storage into one of the most powerful supercomputers in the world. Each EC2 UltraCluster of P4d instances comprises more than 4,000 of the latest NVIDIA A100 GPUs, petabit-scale non-blocking networking infrastructure, and high-throughput, low-latency storage with FSx for Lustre.

Any ML developer, researcher, or data scientist can spin up P4d instances in EC2 UltraClusters to get access to supercomputer-class performance with a pay-as-you-go usage model to run their most complex multi-node ML training and HPC workloads.

Now Available

P4 instances are available in one size (p4d.24xlarge) and you can launch them in the US East (N. Virginia) and US West (Oregon) Regions today. Your AMI will need to have the NVIDIA A100 drivers and the most recent ENA driver (the Deep Learning Containers have already been updated).
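For readers who script their launches, here is a minimal sketch of what a launch request might look like, assuming the boto3 SDK's EC2 `run_instances` call. The helper name `build_p4d_launch_params` and the AMI, subnet, and security group IDs are hypothetical placeholders, not values from the original post. The helper only builds the request parameters, so it runs without AWS credentials.

```python
def build_p4d_launch_params(ami_id, subnet_id, security_group_id, use_efa=True):
    """Build keyword arguments for boto3's EC2 client `run_instances` call.

    The AMI is assumed to already contain the NVIDIA A100 drivers and a
    recent ENA driver, as the text requires.
    """
    interface = {
        "DeviceIndex": 0,
        "SubnetId": subnet_id,
        "Groups": [security_group_id],
    }
    if use_efa:
        # Request an Elastic Fabric Adapter instead of a standard interface.
        interface["InterfaceType"] = "efa"
    return {
        "ImageId": ami_id,
        "InstanceType": "p4d.24xlarge",  # the only P4 size at launch
        "MinCount": 1,
        "MaxCount": 1,
        "NetworkInterfaces": [interface],
    }


if __name__ == "__main__":
    # Placeholder IDs for illustration only; substitute real resources.
    params = build_p4d_launch_params("ami-EXAMPLE", "subnet-EXAMPLE", "sg-EXAMPLE")
    print(params)
    # With boto3 installed and credentials configured, this could be sent as:
    #   import boto3
    #   ec2 = boto3.client("ec2", region_name="us-east-1")  # or "us-west-2"
    #   ec2.run_instances(**params)
```

The region codes `us-east-1` and `us-west-2` correspond to the US East (N. Virginia) and US West (Oregon) Regions named above.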

If you are using multiple P4s to run distributed training jobs, you can use EFA and an MPI-compatible application to make the best use of the 400 Gbps of networking and the petabit-scale networking fabric.
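To get a rough feel for what 400 Gbps means for distributed training, the sketch below estimates an idealized lower bound on the time to synchronize gradients across instances. It assumes a ring all-reduce communication pattern, which the original post does not specify, and it ignores latency, protocol overhead, and any overlap with computation. The function name and the example payload size are hypothetical.

```python
def ring_allreduce_seconds(payload_bytes, nodes, link_gbps=400):
    """Idealized ring all-reduce time: bandwidth term only, no latency.

    In a ring all-reduce, each node sends and receives roughly
    2 * (nodes - 1) / nodes times the payload size.
    """
    if nodes < 2:
        return 0.0  # nothing to synchronize with a single node
    bytes_per_second = link_gbps * 1e9 / 8  # Gbps -> bytes per second
    traffic_per_node = 2 * (nodes - 1) / nodes * payload_bytes
    return traffic_per_node / bytes_per_second


if __name__ == "__main__":
    # Hypothetical example: 10 GB of gradients across 4 instances.
    seconds = ring_allreduce_seconds(10e9, nodes=4)
    print(f"Estimated lower bound: {seconds:.3f} s per synchronization")
```

Real jobs will take longer than this bound; the point is only that per-node network bandwidth directly limits how often large models can synchronize.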

You can purchase P4 instances in On-Demand, Savings Plan, Reserved Instance, and Spot form. Support for use of P4 instances in managed AWS services such as Amazon SageMaker and Amazon Elastic Kubernetes Service is in the works and will be available later this year.

Source: AWS News Blog

Gathered by @cloudspacevn